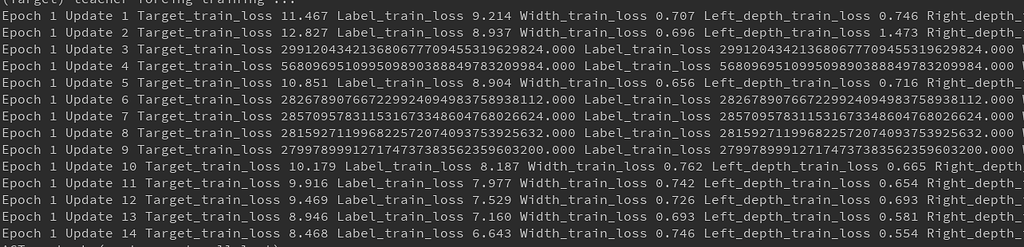

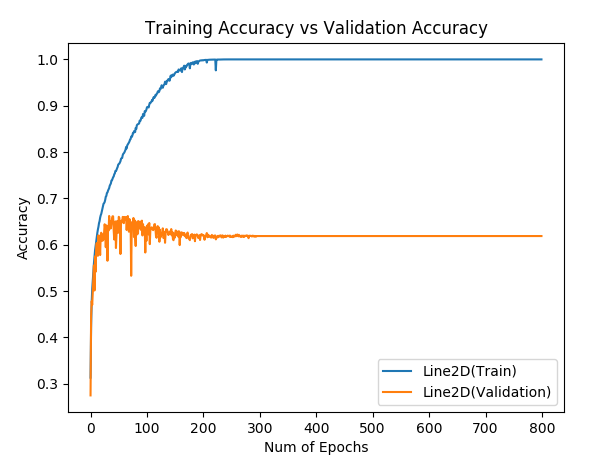

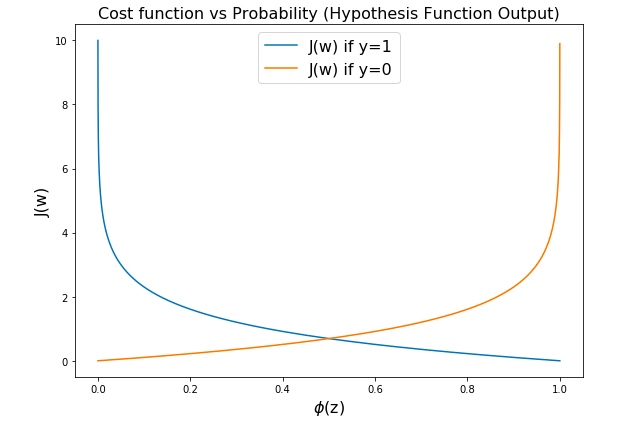

There are three types of loss functions in PyTorch.

To learn more about standard coding practices for writing a neural network training pipeline, I would strongly recommend the open-source PyTorch Image Models framework for training computer vision models. I would also recommend reading the source code of the built-in loss functions to get a better understanding of how they are defined internally in PyTorch. The PyTorch documentation lists all the predefined loss functions that you can use directly in your neural network training code.

While writing the forward pass for training the neural network, you pass the model's outputs and the target labels to one of these loss functions to get a scalar loss value, which is then used for backward propagation. Remember, you can also write a custom loss function for your application and use it instead of a built-in PyTorch loss function. Here, we will use cross-entropy loss as the example, but you can use any loss function from the library.
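To make the forward pass, loss, and backward steps concrete, here is a minimal sketch. The toy linear model, the random data, the SGD optimizer, and the `CustomCrossEntropy` class are all assumptions made for illustration and are not from the original post. It first uses the built-in `nn.CrossEntropyLoss`, then defines a hand-written equivalent as one possible shape for a custom loss.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)

# Hypothetical toy setup: 8 samples, 4 features, 3 classes.
model = nn.Linear(4, 3)
inputs = torch.randn(8, 4)
targets = torch.randint(0, 3, (8,))

# Built-in loss: takes raw logits and integer class indices.
criterion = nn.CrossEntropyLoss()
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)

optimizer.zero_grad()
logits = model(inputs)              # forward pass
loss = criterion(logits, targets)   # scalar loss value
loss.backward()                     # backward propagation
optimizer.step()                    # parameter update


class CustomCrossEntropy(nn.Module):
    """Hypothetical custom loss: cross-entropy written by hand."""

    def forward(self, logits, targets):
        log_probs = F.log_softmax(logits, dim=1)
        # Select the log-probability of the correct class for each sample.
        picked = log_probs.gather(1, targets.unsqueeze(1)).squeeze(1)
        return -picked.mean()


# The custom loss is used the same way as the built-in one.
custom_criterion = CustomCrossEntropy()
custom_loss = custom_criterion(model(inputs), targets)
builtin_loss = criterion(model(inputs), targets)
```

Because the custom loss is an `nn.Module` built from differentiable tensor operations, autograd handles its backward pass without extra code, so it can be swapped in for `criterion` in the training step above.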
